Keyword research, high-quality content, link building, on-page SEO: these tactics help you improve organic rankings. But SEO strategies will only take you so far.

Getting your technical SEO house in order is the final piece of the puzzle in site ranking.

Technical SEO is about giving your searchers a top-quality web experience. People want fast-loading web pages, stable graphics, and content that’s easy to interact with.

In this article, we’ll explore what technical SEO is and how it impacts website growth.

What is technical SEO (and how does it impact growth)?

Technical SEO is the process of making sure your website meets the technical requirements of search engines in order to improve organic rankings. By improving certain elements of your website, you’ll help search engine spiders crawl and index your web pages more easily.

Strong technical SEO can also help improve your user experience, increase conversions, and boost your site’s authority.

The key elements of technical SEO include:

- Crawling

- Indexing

- Rendering

- Site structure

Even with high-quality content that answers search intent, provides value, and is rich with backlinks, it will be difficult to rank without proper technical SEO in place. The easier you make life for Google, the more likely your site will rank.

It’s been suggested that the speed of a system’s response should mimic the delays humans experience when they interact with one another. That means page responses should take around 1-4 seconds.

If your site takes more than four seconds to load, people may lose interest and bounce.

Page speed doesn’t just impact user experience; it can also affect sales on ecommerce sites.

As Kit Eaton famously wrote in Fast Company:

“Amazon calculated that a page load slowdown of just one second could cost it $1.6 billion in sales each year. Google has calculated that by slowing its search results by just four-tenths of a second they could lose 8 million searches per day, meaning they’d serve up many millions fewer sponsored ads.”

That’s proof that a slow-loading site, just one element of technical SEO, can directly impact business growth.

Should you use a technical SEO consultant or do your own audit?

You should conduct a technical SEO audit every few months. This helps you monitor technical factors (which we’re about to dive into), on-page factors like site content, and off-page factors like backlinks.

Tools like Ahrefs make running your own audit easier. They’re also cheaper and faster than hiring a technical SEO consultant. A consultant may take weeks or even months to complete an audit, while conducting your own can take hours or days.

That said, expert insights from an experienced consultant can be important if you:

- Are deploying a new website and want to make sure it’s set up properly

- Are replacing an old website with a new one and want to ensure a seamless migration

- Have multiple websites and want insights into whether you should optimize them separately or merge them together

- Run your web pages in multiple languages and want to ensure each version is properly set up

Either way, to get technical SEO right, prioritize a well-rounded understanding of the basics.

1. Crawling and indexing

Search engines need to be able to identify, crawl, render, and index web pages.

Website architecture

Web pages that aren’t clearly linked to the homepage are harder for search engines to identify.

You likely won’t have any problem getting your homepage indexed. But pages that are several clicks away from the homepage often cause crawling and indexing issues for a number of reasons, especially if there are too few internal links pointing to them.

A flat website architecture, where web pages are clearly linked to each other, helps avoid this outcome:

Using a pillar page structure with internal links to relevant pages can also help search engines index your site’s content. As homepages usually have a higher page authority, linking pages to the homepage makes them more discoverable to search engine spiders.

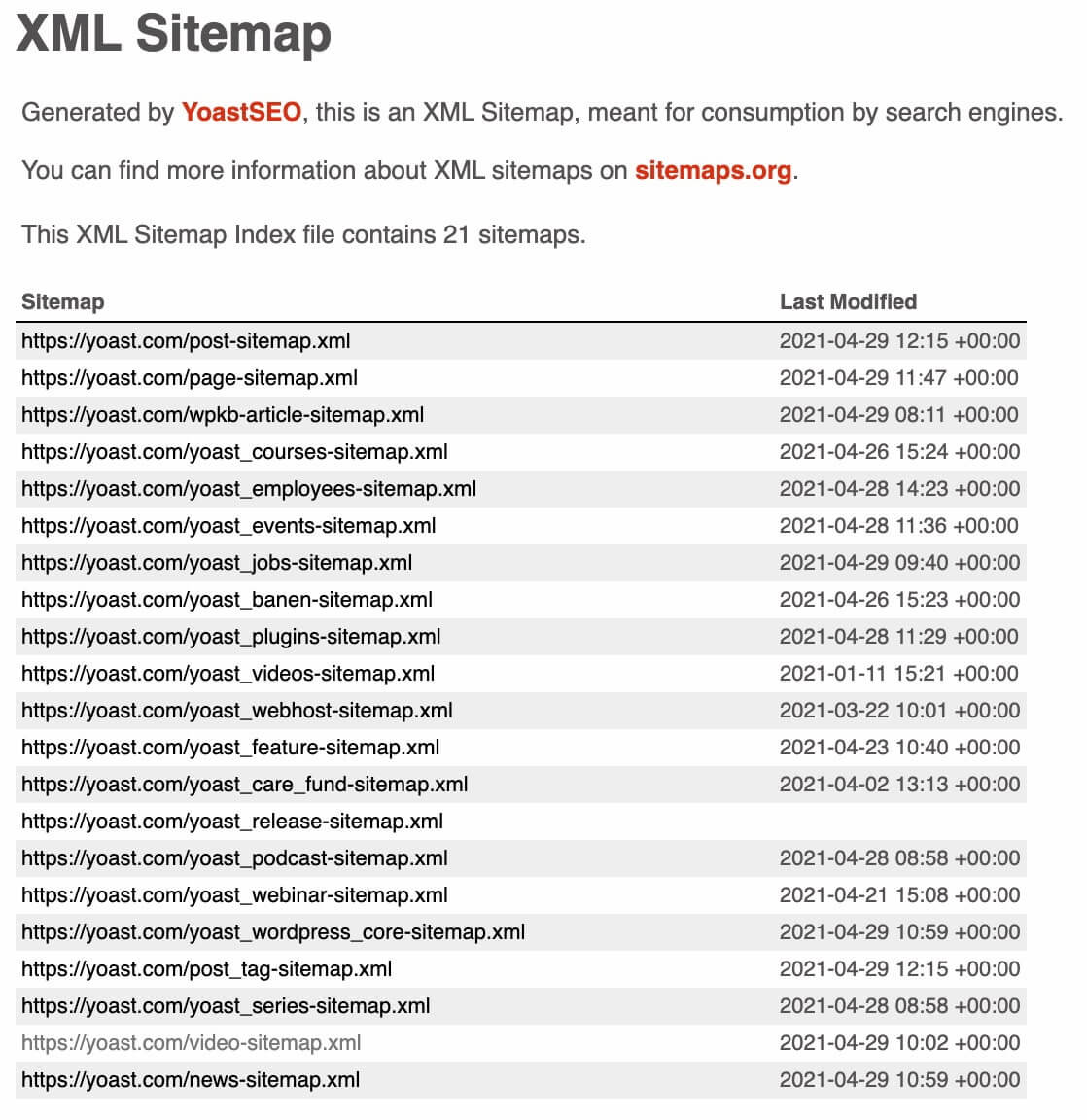
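To make "clicks away from the homepage" concrete, here is a minimal sketch that measures click depth with a breadth-first search over an internal link graph. The `site` dictionary and its URLs are hypothetical; in practice the graph would come from a crawler export.

```python
from collections import deque

def click_depths(links, home="/"):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
site = {
    "/": ["/blog/", "/products/"],
    "/blog/": ["/blog/technical-seo/"],
    "/products/": ["/products/widgets/"],
    "/products/widgets/": ["/products/widgets/blue/"],
}

depths = click_depths(site)
# Pages more than two clicks deep are candidates for extra internal links.
deep_pages = sorted(page for page, depth in depths.items() if depth > 2)
```

The two-click threshold is only an illustrative cutoff, not a rule from Google.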

XML sitemaps

XML sitemaps provide a list of all the most important pages on your site. While they may seem redundant in light of mobile responsiveness and other ranking factors, Google still considers them to be important.

XML sitemaps tell you:

- When a page was last modified

- How frequently pages are updated

- What priority pages have on your site
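The three fields above map onto the `lastmod`, `changefreq`, and `priority` elements of the sitemap protocol. The following is a rough sketch of generating such a file with Python's standard library; the page data and the `build_sitemap` helper are illustrative assumptions, not something the tools mentioned here produce.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Build a sitemap XML string from a list of page dictionaries."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["loc"]
        ET.SubElement(url, "lastmod").text = page["lastmod"]        # last modified
        ET.SubElement(url, "changefreq").text = page["changefreq"]  # update frequency
        ET.SubElement(url, "priority").text = page["priority"]      # relative priority
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical pages for illustration only.
pages = [
    {"loc": "https://example.com/", "lastmod": "2024-01-15",
     "changefreq": "weekly", "priority": "1.0"},
    {"loc": "https://example.com/blog/", "lastmod": "2024-01-10",
     "changefreq": "daily", "priority": "0.8"},
]

sitemap_xml = build_sitemap(pages)
```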

Within Google Search Console, you can check the XML sitemap the search engine sees when crawling your site. Here’s Yoast.com’s XML sitemap:

Use the following tools to regularly check for crawl errors and to see if web pages are indexed by search engine spiders:

Screaming Frog

Screaming Frog is the industry gold standard for identifying indexing and crawling issues:

When you run it, it extracts the data from your site and audits for common issues, like broken redirects and URLs. With these insights, you can make quick changes like fixing a 404 broken link, or plan for longer-term fixes like updating meta descriptions site-wide.

Check indexing with the Coverage report in Google Search Console

Google Search Console has a helpful Coverage report that tells you if Google is unable to fully index or render pages that you want to be found:

Sometimes web pages may be indexed by Google but incorrectly rendered. That means that although Google can index the page, it’s not crawling 100% of the content.

2. Site structure

Your site structure is the foundation of all other technical elements. It will influence your URL structure and robots.txt, which allows you to decide which pages search engines can crawl and which to ignore.

Many crawling and indexing issues are a result of poorly thought-out site structures. If you can build a clear site structure that both Google and searchers can easily navigate, you won’t have crawling and indexing issues later on.

In short, simple site structures help you with other technical SEO tasks.

A neat site structure logically links web pages so neither humans nor search engines are confused about the pages’ order.

Orphan pages are those that are cut off from other pages because they have no internal links pointing to them. Site managers should avoid these because they’re vulnerable to not being crawled and indexed by search engines.
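As a rough sketch of how orphan pages could be detected, the snippet below compares a list of known pages (for example, from a sitemap) against the targets of all internal links. The page list, link map, and `find_orphans` helper are hypothetical.

```python
def find_orphans(all_pages, links, home="/"):
    """Return pages that no other page links to (the homepage is excluded)."""
    linked = {target for targets in links.values() for target in targets}
    return sorted(page for page in all_pages if page not in linked and page != home)

# Hypothetical data: every known page, and which pages each page links to.
all_pages = ["/", "/blog/", "/blog/technical-seo/", "/old-landing-page/"]
links = {
    "/": ["/blog/"],
    "/blog/": ["/blog/technical-seo/", "/"],
}

orphans = find_orphans(all_pages, links)
```

Any page returned here would need at least one internal link from a relevant page to become discoverable.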

Tools like Visual Site Mapper can help you see your site structure clearly and assess how well pages are linked to each other. A solid internal linking structure demonstrates the relationship between your content for Google and users alike:

Breadcrumb navigation

Breadcrumb navigation is the equivalent of leaving a trail of clues for search engines and users about the type of content they can expect.

Breadcrumb navigation automatically adds internal links to subpages on your site:

This helps clarify your site’s architecture and makes it easy for humans and search engines to find what they’re looking for.

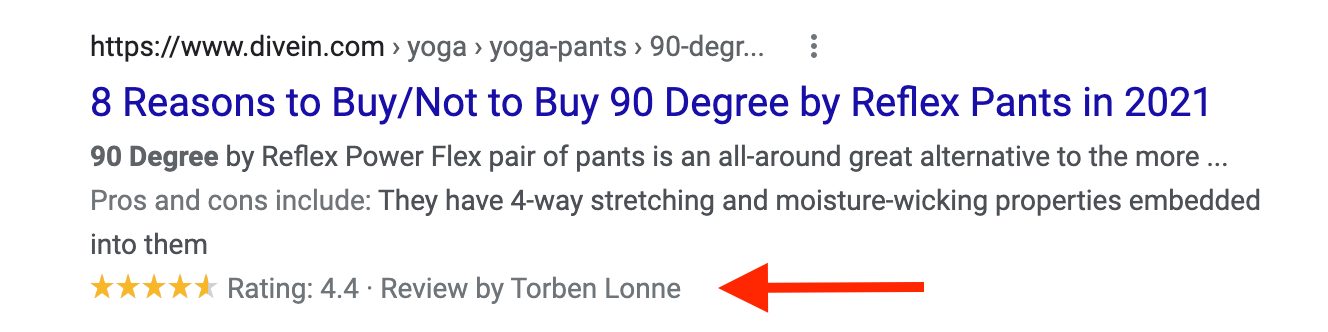
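One way a breadcrumb trail could be derived is from the URL path itself, with each path segment becoming a link to its parent section. This is a simplified sketch under that assumption; the `breadcrumb_trail` helper and example URL are hypothetical, and real sites often take labels from page titles instead.

```python
from urllib.parse import urlparse

def breadcrumb_trail(url):
    """Return (label, url) pairs from the homepage down to the given page."""
    parsed = urlparse(url)
    root = f"{parsed.scheme}://{parsed.netloc}/"
    trail = [("Home", root)]
    path = ""
    for segment in [s for s in parsed.path.split("/") if s]:
        path += segment + "/"
        label = segment.replace("-", " ").title()  # "link-building" -> "Link Building"
        trail.append((label, root + path))
    return trail

trail = breadcrumb_trail("https://example.com/blog/link-building/")
```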

Structured data

Using structured data markup can help search engines better understand your content. As a bonus, it also makes you eligible to be featured in rich snippets, which can encourage more searchers to click through to your site.

Here’s an example of a review rich snippet in Google:

You can use Google’s Structured Data Markup Helper to help you create new tags.
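For illustration, review markup is commonly expressed as JSON-LD using the schema.org vocabulary. The sketch below builds such a snippet in Python; the product name, rating, and author are made-up values, and the exact properties Google requires for a given rich result should be checked against its documentation.

```python
import json

def review_json_ld(item_name, rating, author):
    """Return a JSON-LD script tag describing a review of a product."""
    data = {
        "@context": "https://schema.org",
        "@type": "Review",
        "itemReviewed": {"@type": "Product", "name": item_name},
        "reviewRating": {"@type": "Rating", "ratingValue": rating, "bestRating": 5},
        "author": {"@type": "Person", "name": author},
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Hypothetical values for illustration only.
snippet = review_json_ld("Example Dog Collar", 4.5, "Jane Doe")
```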

URL structure

Long, complicated URLs that feature seemingly random numbers, letters, and special characters confuse users and search engines.

URLs need to follow a simple and consistent pattern. That way, users and search engines can easily understand where they are on your site.
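A simple and consistent pattern can be enforced in code. The following is a minimal sketch, assuming a `/section/slug/` convention; the `slugify` and `build_url` helpers and the example values are hypothetical.

```python
import re
import unicodedata

def slugify(title):
    """Turn a page title into a lowercase, hyphen-separated URL slug."""
    ascii_text = (
        unicodedata.normalize("NFKD", title).encode("ascii", "ignore").decode("ascii")
    )
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")

def build_url(base, section, title):
    """Build a URL of the form base/section/slug/."""
    return f"{base.rstrip('/')}/{section}/{slugify(title)}/"

url = build_url("https://example.com/", "webinar", "What Is Technical SEO? (A Guide)")
```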

On CXL, all the pages on the webinar hub include the /webinar/ subfolder to help Google recognize that these pages fall under the “webinar” category:

Google clearly recognizes it this way, too. If you type https://cxl.com/webinar/ into the search bar, Google pulls up relevant webinar results that fall under this folder:

Sitelinks within the results also show that Google recognizes the categorization:

3. Content quality

High-quality content is undoubtedly one of your site’s strongest assets. But there’s a technical side to content, too.

Duplicate content

Creating original, high-quality content is one way to ensure sites don’t run into any duplicate content issues. But duplicate content can be a problem even where the standard is generally high.

If a CMS creates multiple versions of the same page on different URLs, this counts as duplicate content. All large sites will have duplicate content somewhere; the issue is when the duplicate content is indexed.

To prevent duplicate content from harming your rankings, add the noindex tag to duplicate pages.
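The noindex tag is a robots meta tag in the page's head, such as `<meta name="robots" content="noindex">`. As a rough sketch, the parser below checks whether a page's HTML carries that directive; the `has_noindex` helper and sample markup are illustrative assumptions, and it does not cover the equivalent `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            self.directives.update(d.strip().lower() for d in content.split(","))

def has_noindex(html):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives

# Hypothetical duplicate page carrying the tag.
duplicate_page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
```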

Within Google Search Console, you can check if your noindex tags are set up by using Inspect URL:

If Google is still indexing your page, you’ll see a “URL is available to Google” message. That would mean that your noindex tag hasn’t been set up correctly. An “Excluded by noindex tag” pop-up tells you the page is no longer indexed.

Google may take a few days or weeks to recrawl the pages you don’t want indexed.

Use robots.txt

If you find examples of duplicate content on your site, you don’t have to delete them. Instead, you can block search engines from crawling them.

Create a robots.txt file to block search engine crawlers from pages you no longer want crawled. These could be orphan pages or duplicate content. Keep in mind that robots.txt controls crawling rather than indexing: a blocked URL can still be indexed if other pages link to it, and Google can’t see a noindex tag on a page it isn’t allowed to crawl.
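To illustrate how such rules behave, here is a minimal sketch that parses a robots.txt body with Python's standard `urllib.robotparser` and checks whether given URLs may be crawled. The rules and URLs are hypothetical examples, and this parser's matching may differ in edge cases from how Google interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt blocking tag archives and print versions of pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /tag/
Disallow: /print/
"""

def is_crawlable(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt rules allow crawling the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

blocked = is_crawlable(ROBOTS_TXT, "https://example.com/tag/seo/")
allowed = is_crawlable(ROBOTS_TXT, "https://example.com/blog/technical-seo/")
```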

Canonical URLs

Most web pages with duplicate content should just get a noindex tag added to them. But what about pages with very similar content on them? These are often product pages.

For example, Hugo & Hudson sells products for dogs and hosts similar pages with slight variations in size, color, and description:

Without canonical tags, listing the same product with slight variations means each variant gets its own URL with near-identical content.

With canonical tags, Google understands how to tell the primary product page from the variations and doesn’t assume variant pages are duplicates.
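As a sketch of what a canonical tag looks like, the snippet below assumes that variants are expressed as query parameters (for example `?color=red`) and that the primary page is the same URL without them. That assumption, the helper names, and the example URL are all hypothetical; shops that use separate paths per variant would need a different mapping.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit

def canonical_url(variant_url):
    """Strip the query string and fragment to get the primary product URL."""
    parts = urlsplit(variant_url)
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))

def canonical_tag(variant_url):
    """Return the <link rel="canonical"> tag to place in the variant page's head."""
    return f'<link rel="canonical" href="{escape(canonical_url(variant_url))}">'

tag = canonical_tag("https://example.com/products/dog-collar?color=red&size=m")
```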

4. Site speed

Page speed can directly impact a page’s ranking. If you could only focus on one element of technical SEO, understanding what contributes to page speed should be top of the list.

Page speed and organic traffic

A fast-loading site isn’t the only thing you need for high-ranking pages, but Google does use it as a ranking factor.

Using Google PageSpeed Insights, you can quickly assess your site’s loading time:

CDNs and load time

CDNs, or content delivery networks, reduce the distance data has to travel by serving files from a cache server close to the searcher. As it takes less time to transfer the file, the wait time decreases and the page loads faster.

But CDNs can be hit or miss. If they’re not set up properly, they can actually slow your site’s loading times down. If you do set up a CDN, make sure to test your load times both with and without the CDN using webpagetest.org.

Mobile responsiveness

Mobile-friendliness is now a key ranking factor. Google’s mobile-friendly test is a simple usability test to see if your web pages are functional and provide a good UX on a smartphone.

The web app also highlights a few things site managers can do to improve their pages.

For example, Google recommends using AMP (Accelerated Mobile Pages), which is designed to deliver content much faster to mobile devices.

Redirects and page speed

Redirects can improve sites by ensuring there aren’t broken links leading to dead-end pages or issues surrounding link equity and juice. But lots of redirects will cause your site to load more slowly.

The more redirects, the more time searchers spend getting to the desired landing page.

Since redirects slow down site loading times, it’s important to keep the number of page redirects low. Redirect loops (i.e., when multiple redirects lead back to the same page) also cause loading issues and error messages:
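Redirect chains and loops can be spotted by walking a redirect map. The sketch below assumes the redirects have already been exported as a simple source-to-target dictionary; the `follow_redirects` helper and the paths are hypothetical.

```python
def follow_redirects(start, redirects, max_hops=10):
    """Follow redirects from `start`; return (chain, is_loop)."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:  # we have been here before: redirect loop
            return chain + [current], True
        chain.append(current)
        seen.add(current)
        if len(chain) - 1 >= max_hops:  # stop following very long chains
            break
    return chain, False

# Hypothetical redirect map: source path -> target path.
redirects = {
    "/old": "/older",
    "/older": "/new",
    "/a": "/b",
    "/b": "/a",
}

chain, is_loop = follow_redirects("/old", redirects)
loop_chain, loop_found = follow_redirects("/a", redirects)
```

A chain with more than one hop, such as `/old` to `/older` to `/new`, is a candidate for collapsing into a single redirect straight to the final page.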

Large files and load time

Large files like high-res images and videos take longer to load. By compressing them, you can reduce load time and improve the overall user experience.

Common formats like GIF, JPEG, and PNG have plenty of solutions for compressing images.

To save time, try compressing your images in bulk with dedicated compression tools like tinypng.com and compressor.io.

Aim for PNG image formats as they tend to achieve the best quality-to-compression ratio.

WordPress plugins like SEO Friendly Images and Smush can also guide you on how to compress your images:

When using image editing platforms, selecting the “Save for web” option can help ensure that images aren’t too large.

It’s a juggling act, though. It’s all very well compressing all your large files, but if it negatively impacts user experience by displaying a poor-quality web page, it may be better not to compress the files in the first place.

Cache plugins and storing resources

The browser cache saves resources on a visitor’s computer when they visit a website for the first time. When they return for a second time, these saved resources serve the needed information at a faster rate.

Combining W3 Total Cache’s caching and Autoptimize’s compression can help you improve your cache performance and load time.

Plugins

It’s easy to add multiple plugins to your site, but take care not to overload it, as they can add seconds to your pages’ load time.

Removing plugins that don’t serve an important purpose will help improve your page’s load time. Limit your site to running only plugins that add real value.

You can check if plugins are helpful or redundant through Google Analytics. In a test environment, manually turn plugins off (one at a time) and test load speed.

You can also run live tests to see if disabling certain plugins affects conversions. For example, turn off an opt-in box plugin and measure whether that affects your opt-in rate.

Alternatively, use a tool like Pingdom Tools to measure whether plugins are affecting loading speeds.

Third-party scripts

On average, each third-party script adds an additional 34 milliseconds to page load time.

While some scripts like Google Analytics are necessary, your site may have accumulated third-party scripts that could easily be removed.

This often happens when you update your site and don’t remove defunct assets. Artifacts from old iterations waste resources and affect speed and UX. You can use a tool like Purify CSS to remove unnecessary CSS, or ensure your developers check and purge consistently.
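As a starting point for such an audit, the sketch below lists the external hosts a page loads scripts from by parsing its HTML. The sample markup, the hosts in it, and the `third_party_scripts` helper are hypothetical, and scripts injected at runtime by other scripts would not show up in this kind of static check.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class ScriptCollector(HTMLParser):
    """Collect the src attribute of every <script> tag."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        if tag == "script":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)

def third_party_scripts(html, site_host):
    """Return the hosts of scripts served from a domain other than site_host."""
    collector = ScriptCollector()
    collector.feed(html)
    hosts = []
    for src in collector.sources:
        host = urlparse(src).netloc  # empty for relative URLs like /js/app.js
        if host and host != site_host:
            hosts.append(host)
    return hosts

# Hypothetical page markup.
page = """
<script src="/js/app.js"></script>
<script src="https://www.googletagmanager.com/gtag/js?id=G-XXXX"></script>
<script src="https://widgets.example.net/chat.js"></script>
"""

external_hosts = third_party_scripts(page, "example.com")
```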

Conclusion

Optimizing technical SEO isn’t a one-size-fits-all process. There are elements that make sense for some websites but not all, and this will vary based on your audience, expected user experience, customer journey, goals, and the like.

By learning the fundamentals behind technical SEO, you’ll be more likely to create and optimize web pages for Google and visitors alike. You’ll also be able to perform technical SEO audits or understand a professional’s methods.

Ready to outrank the competition with technical SEO? Sign up for the CXL technical SEO course today.