The technical elements of your website’s SEO are crucial to search performance. Understand and maintain them and your website can rank prominently, drive traffic, and help boost sales. Neglect them, and you run the risk of pages not showing up in SERPs.

In this article, you’ll learn how to conduct a technical SEO audit to find and fix issues in your website’s structure. We’ll look at key ranking factors including content, speed, structure, and mobile-friendliness to ensure your site can be crawled and indexed.

We’ll also show you the tools you need to boost on-page and off-page SEO efforts and performance, and how to use them.

Using a technical SEO audit to improve your SEO performance

Think of a technical SEO audit as a website health check. Much like you periodically review your digital marketing campaigns to get the most from them, a technical SEO audit evaluates site performance to identify areas for improvement.

These areas fall into three categories:

1. Technical errors

Identifying red flags in the back end and front end of your website that negatively impact performance and, thus, your SEO. Technical errors include crawling issues, broken links, slow site speed, and duplicate content. We’ll look at each of these in this article.

2. UX errors

User experience (UX) tends to be thought of more as a design issue than an SEO one. However, how your website is structured will impact SEO performance.

To better understand which pages are important and which are lower priority, Google uses an algorithm called Page Importance.

Page Importance is determined by type of page, internal and external links, update frequency, and your sitemap. More importantly from a UX perspective, however, it’s determined by page position. In other words, where the page sits on your site.

This makes website architecture an important technical SEO factor. The harder it is for a user to find a page, the longer it will take Google to find it. Ideally, users should be able to get where they want to go in as few clicks as possible.

A technical SEO audit addresses issues with site structure and accessibility that prevent them from doing this.

3. Ranking opportunities

This is where technical SEO meets on-page SEO. As well as prioritizing key pages in your site architecture, an audit helps convince Google of a page’s importance by:

- Identifying and merging content targeting the same or similar keywords;

- Removing duplicate content that dilutes importance, and;

- Improving metadata so that users see what they’re looking for in search engine results pages (SERPs).

It’s all about helping Google understand your website better so that pages show up for the right searches.

As with any kind of health check, a technical SEO audit shouldn’t be a one-and-done thing. It should be conducted when your website is built or redesigned, after any changes in structure, and periodically.

The general rule of thumb is to carry out a mini-audit every month and a more in-depth audit every quarter. Sticking to this routine will help you monitor and understand how changes to your website affect SEO performance.

6 tools to help you perform a technical SEO audit

Here are the SEO tools we’ll be using to perform a technical audit:

These tools are free, except Screaming Frog, which limits free plan users to 500 pages.

If you run a large website with more than 500 pages, Screaming Frog’s paid version offers unrestricted crawling for $149 per year.

Alternatively, you can use Semrush’s Site Audit Tool (free for up to 100 pages) or Ahrefs’ Site Audit tool. Both perform a similar job, with the added benefits of error and warning flagging, and instructions on how to fix technical issues.

1. Find your robots.txt file and run a crawl report to identify errors

The pages on your website can only be indexed if search engines can crawl them. Therefore, before running a crawl report, look at your robots.txt file. You can find it by adding “robots.txt” to the end of your root domain:

https://yourdomain.com/robots.txt

The robots.txt file is the first file a bot looks for when it lands on your site. The information in there tells bots what they should and shouldn’t crawl by ‘allowing’ and ‘disallowing’.

Here’s an example from the Unbounce website:

You can see here that Unbounce is requesting that search crawlers don’t crawl certain areas of its site. These are back-end folders that don’t need to be indexed for SEO purposes.

By disallowing them, Unbounce is able to save on bandwidth and crawl budget, the number of pages Googlebot crawls and indexes on a website within a given timeframe.

If you run a large site with thousands of pages, like an ecommerce store, using robots.txt to disallow pages that don’t need indexing will give Googlebot more time to get to the pages that matter.

What Unbounce’s robots.txt also does is point bots at its sitemap. This is good practice as your sitemap provides details of every page you want Google and Bing to discover (more on this in the next section).

Look at your robots.txt to make sure crawlers aren’t crawling private folders and pages. Likewise, check that you aren’t disallowing pages that should be indexed.
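If you’d rather script this check than read the file by eye, here is a minimal sketch using Python’s standard-library robots.txt parser. The robots.txt content and the paths being tested are made-up placeholders, not Unbounce’s real file, so swap in your own.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace with the lines from your own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /private/
Sitemap: https://yourdomain.com/sitemap.xml
"""


def check_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return {path: bool} showing whether user_agent may crawl each path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {path: parser.can_fetch(user_agent, path) for path in paths}


# Pages you want indexed should be crawlable; private folders should not be.
results = check_paths(
    ROBOTS_TXT, ["/toys", "/private/reports", "/wp-admin/options.php"]
)
for path, allowed in results.items():
    print(f"{path}: {'crawlable' if allowed else 'disallowed'}")
```

Feed it the list of pages you expect to rank alongside the folders you expect to be hidden, and any surprise in either direction is worth a closer look.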

If you need to make changes to your robots.txt, you’ll find it in the root directory of your web server (if you’re not familiar with these files, it’s worth getting help from a web developer). If you use WordPress, the file can be edited using the free Yoast SEO plugin. Other CMS platforms like Wix let you make changes via built-in SEO tools.

Run a crawl report to check that your website is indexable

Now that you know bots are being given the correct instructions, you can run a crawl report to check that pages you want to be indexed aren’t being hampered.

Enter your URL into Screaming Frog, or go to Index > Coverage in your Google Search Console.

Each of these tools displays metrics in a different way.

Screaming Frog looks at each URL individually, splitting indexing results into two columns:

1. Indexability: This shows whether a URL is indexable or non-indexable

2. Indexability status: The reason why a URL is non-indexable

The Google Search Console Index Coverage report displays the status of every page of your website.

The report shows:

- Errors: Redirect errors, broken links, and 404s

- Valid with warnings: Pages that are indexed but with issues that may or may not be intentional

- Valid: Successfully indexed pages

- Excluded: Pages excluded from indexing for reasons such as being blocked by the robots.txt or redirected

Flag and fix redirect errors to improve crawling and indexing

Every request for a page on your website returns an HTTP status code. Each code has a different meaning.

Screaming Frog displays these in the Status Code column:

All being well, most of the pages on your website will return a 200 status code, which means the page is OK. Pages with redirects or errors will display a 3xx, 4xx, or 5xx status code.

Here’s an overview of codes you might see in your audit and how to fix the ones that matter:

3xx status codes

- 301: Permanent redirect. Content has been moved to a new URL and SEO value from the old page is being passed on.

301s are fine, as long as there isn’t a redirect chain or loop that causes multiple redirects. For example, if redirect A goes to redirect B and C to get to D, it can make for a poor user experience and slow page speed. This can increase bounce rate and hurt conversions. To fix the issue you’ll need to delete redirects B and C so that redirect A goes directly to D.

By going to Reports > Redirect Chains in Screaming Frog, you can download the crawl path of your redirects and identify which 301s need removing.

- 302: Temporary redirect. Content has been moved to a URL temporarily.

302s are useful for purposes such as A/B testing, where you want to trial a new template or layout. However, if a 302 has been in place for longer than three months, it’s worth making it a 301.

- 307: Temporary redirect. It works like a 302 but keeps the original request method, and browsers also show it when they upgrade a request from HTTP to HTTPS.

This redirect should be used if you’re sure the move is temporary and you’ll still need the original URL.
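To make the redirect chain problem concrete, here is a small sketch that walks a redirect map and reports the full path. The `REDIRECTS` dictionary is a hypothetical stand-in for a crawl export (source URL to redirect target), and `resolve_redirect` is an illustrative helper rather than a feature of any tool mentioned here.

```python
def resolve_redirect(start_url, redirects, max_hops=10):
    """Follow start_url through the redirects mapping.

    Returns (chain, is_loop), where chain lists every URL visited in order.
    """
    chain = [start_url]
    seen = {start_url}
    current = start_url
    while current in redirects and len(chain) <= max_hops:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            # We have come back to a URL already visited: a redirect loop.
            return chain, True
        seen.add(current)
    return chain, False


# Hypothetical crawl export: source URL -> redirect target.
REDIRECTS = {"/a": "/b", "/b": "/c", "/c": "/d", "/x": "/y", "/y": "/x"}

for start in ("/a", "/x"):
    chain, is_loop = resolve_redirect(start, REDIRECTS)
    label = "loop" if is_loop else f"{len(chain) - 1} hop(s)"
    print(" -> ".join(chain), f"[{label}]")
```

Any chain with more than one hop is a candidate for pointing the first URL straight at the final destination, and any loop needs breaking.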

4xx status codes

- 403: Access forbidden. This tends to display when content is hidden behind a login.

- 404: Page doesn’t exist due to a broken link or when a page or post has been deleted but the URL hasn’t been redirected.

Like redirect chains, 404s don’t make for a great user experience. Update any internal links pointing at 404 pages so that they point to a live page or to the URL the old page now redirects to.

- 410: Page permanently deleted.

Check any page showing a 410 error to ensure it’s permanently gone and that no content could warrant a 301 redirect.

- 429: Too many server requests in a short space of time.

5xx status codes

All 5xx status codes are server-related. They indicate that the server couldn’t perform a request. While these do need attention, the problem lies with your hosting provider or web developer, not your website.
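If you export status codes from a crawl, a lookup like the one below can turn them into a to-do list. It is only a sketch: the action wording paraphrases the overview above, and the `CRAWL` data is invented for illustration.

```python
# Suggested follow-up for each status code covered in this section.
ACTIONS = {
    200: "ok: no action needed",
    301: "permanent redirect: check for chains or loops",
    302: "temporary redirect: make it a 301 if it is long-lived",
    307: "temporary redirect: confirm the move really is temporary",
    403: "forbidden: expected for content behind a login",
    404: "not found: fix internal links or add a redirect",
    410: "gone: confirm the page should stay deleted",
    429: "too many requests: the server was hit too often in a short time",
}


def triage(code):
    """Return the suggested follow-up for an HTTP status code."""
    if code in ACTIONS:
        return ACTIONS[code]
    if 500 <= code <= 599:
        return "server error: raise with your host or developer"
    return "unlisted code: review manually"


def summarize(crawl):
    """Group crawled URLs by suggested action. crawl maps URL -> status code."""
    report = {}
    for url, code in crawl.items():
        report.setdefault(triage(code), []).append(url)
    return report


# Hypothetical crawl results.
CRAWL = {"/": 200, "/toys": 200, "/old-toys": 301, "/missing": 404, "/api": 503}
for action, urls in summarize(CRAWL).items():
    print(f"{action}: {', '.join(urls)}")
```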

Set up canonical tags to point search engines at important pages

Canonical tags appear in the <head> section of a page’s code.

They exist to let search engine bots know which page to index and display in SERPs when you have pages with identical or similar content.

For example, say an ecommerce site was selling a blue toy police car and that was listed under “toys > cars > blue toy police car” and “toys > police cars > blue toy car”.

It’s the same blue toy police car on both pages. The only difference is the breadcrumb links that take you to the page.

By adding a canonical tag that points to the “master page” (toys > cars), you signal to search engines that this is the original product. The product listed at “toys > police cars > blue toy car” is a copy.

Another example of when you’d want to add canonical tags is where pages have added URL parameters.

For instance, “https://www.yourdomain.com/toys” would show similar content to “https://www.yourdomain.com/toys?page=2” or “https://www.yourdomain.com/toys?price=descending”, which have been used to filter results.

Without a canonical tag, search engines would treat each page as unique. Not only does this mean having multiple pages indexed, thus reducing the SEO value of your master page, but it also uses up more of your crawl budget.

Canonical tags can be added directly to the <head> section in the page code of additional pages (not the main page). If you’re using a CMS such as WordPress or Magento, plugins like Yoast SEO make the process simple.
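For reference, a canonical tag is a `link` element with `rel="canonical"` whose `href` is the master URL. The sketch below shows one on a filtered page and uses Python’s built-in HTML parser to confirm where it points. The page markup is a made-up example and `find_canonical` is an illustrative helper name.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> tag in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def find_canonical(html):
    """Return a list of canonical URLs declared in the given HTML."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


# Hypothetical markup for https://www.yourdomain.com/toys?page=2
PAGE = """
<html><head>
<title>Toys, page 2</title>
<link rel="canonical" href="https://www.yourdomain.com/toys">
</head><body>...</body></html>
"""

print(find_canonical(PAGE))
```

An empty list means the page declares no canonical, and more than one entry means conflicting tags that are worth cleaning up.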

2. Review your site architecture and sitemap to make content accessible

Running a site crawl helps to address most of the technical errors on your website. Now we need to look at UX errors.

As we mentioned at the top, a user should be able to get to where they want to be on your site in a few clicks. An easier human experience is synonymous with an easier search bot experience (which, again, saves on crawl budget).

As such, your site structure needs to be logical and consistent. This is achieved by flattening your website architecture.

Here are examples of complicated site architecture and simple (flat) site architecture from Backlinko’s guide on the topic:

You can see how much easier it is in the second image to get from the homepage to any other page on the site.

For a real-world example, look at the CXL homepage:

In three clicks I can get to the content I want: “How to Write Copy That Sells Like a Mofo by Joanna Wiebe.”

- Home > Resources

- Resources > Conversion rate optimization guide

- Conversion rate optimization guide > How to Write Copy That Sells Like a Mofo by Joanna Wiebe

The closer a page is to your homepage, the more important it is. Therefore, you should look to regroup pages based on keywords to bring those most relevant to your audience closer to the top of the site.
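“Clicks from the homepage” is easy to measure once you have a list of internal links. The sketch below runs a breadth-first search over a link graph to get each page’s click depth. The `LINKS` data is invented for illustration, and in practice it would come from a crawl export.

```python
from collections import deque


def click_depths(links, home="/"):
    """Return {page: minimum number of clicks from home} via breadth-first search."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
LINKS = {
    "/": ["/resources", "/about"],
    "/resources": ["/guide"],
    "/guide": ["/guide/copy-that-sells"],
    "/about": [],
    "/old-landing-page": [],  # nothing links here
}

depths = click_depths(LINKS)
# Pages that exist in the crawl but cannot be reached from the homepage.
orphans = sorted(set(LINKS) - set(depths))

for page, depth in sorted(depths.items(), key=lambda item: item[1]):
    print(f"{depth} click(s): {page}")
print("Unreachable from home:", orphans)
```

Pages sitting more than three or so clicks deep, or not reachable at all, are the first candidates for regrouping or extra internal links.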

A flattened website architecture should be mirrored by its URL structure.

For example, when we navigated to “cxl.com/conversion-rate-optimization/how-to-write-copy-that-sells-like-a-mofo-by-joanna-wiebe/”, the URL followed the path we took. And by using breadcrumbs, we can see how we got there so that we can easily get back.

To create a consistent SEO strategy and organize the relationship between pieces of content, use the hub-and-spoke method.

Portent describes this method as “an internal linking strategy that involves linking several pages of related content (sometimes referred to as “spoke” pages) back to a central hub page.”

In our example, the “Conversion rate optimization” guide is the hub and “How to Write Copy That Sells Like a Mofo by Joanna Wiebe” is a spoke.

Depending on the size of your website, you may need help from a web developer to flatten the architecture and overhaul navigation. However, you can improve user experience easily by adding internal links to relevant pages.

At the bottom of “How to Write Copy That Sells Like a Mofo by Joanna Wiebe”, for instance, you’ll find links to other spoke content.

It can also be done in body content, by linking to pages related to specific keywords.

“Optimization” in the above image links off to CXL’s conversion rate optimization guide.

Organize your sitemap to reflect your website structure

The URLs that feature on your site should match those in your XML sitemap. This is the file that you should point bots to in your robots.txt as a guide to crawl your website.

Like robots.txt, you can find your XML sitemap by adding “sitemap.xml” to the end of your root domain:

https://yourdomain.com/sitemap.xml

If you’re updating your site architecture, your sitemap will also need updating. If you use a CMS like WordPress, plugins such as Yoast SEO or Google XML Sitemaps can generate and automatically update a sitemap whenever new content is created. Other platforms like Wix and Squarespace also have built-in features that do the same.

If you need to do it manually, XML-sitemaps will automatically generate an XML sitemap that you can paste into your website’s root (/) folder. However, you should only do this if you’re confident handling these files. If not, get help from a web developer.

Once you have your updated sitemap, submit it at Index > Sitemaps in the Google Search Console.

From here, Google will flag any crawlability and indexing issues.

Working sitemaps will show a status of “Success”. If the status shows “Has errors” or “Couldn’t fetch” there are likely problems with the sitemap’s content.

As with your robots.txt file, your sitemap shouldn’t include any pages that you don’t want to feature in SERPs. But it should include every page you do want indexed, exactly how it appears on your site.

For example, if you want Google to index “https://yourdomain.com/toys”, your sitemap should copy that URL exactly, including the HTTPS protocol. “http://yourdomain.com/toys” or “/toys” will mean pages aren’t crawled.
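A quick script can catch these mismatches before you submit the file. The sketch below parses a sitemap with Python’s standard library and flags any entry that isn’t an absolute HTTPS URL on the expected host. The sample sitemap and the `sitemap_issues` helper are illustrative assumptions, not output from any tool.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace used by the standard sitemap format.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_issues(xml_text, expected_host):
    """Return a list of (url, problem) for entries that won't match the live site."""
    root = ET.fromstring(xml_text)
    issues = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parts = urlparse(url)
        if parts.scheme != "https":
            issues.append((url, "not an absolute HTTPS URL"))
        elif parts.netloc != expected_host:
            issues.append((url, "host does not match the live site"))
    return issues


# Hypothetical sitemap with one good entry and two bad ones.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://yourdomain.com/toys</loc></url>
  <url><loc>http://yourdomain.com/toys/cars</loc></url>
  <url><loc>/toys/police-cars</loc></url>
</urlset>"""

for url, problem in sitemap_issues(SITEMAP, "yourdomain.com"):
    print(f"{url}: {problem}")
```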

3. Test and improve site speed and mobile responsiveness

Site speed has long been a factor in search engine rankings. Google first confirmed as much in 2010. In 2018, they upped the stakes by rolling out mobile page speed as a ranking factor in mobile search results.

When ranking a website based on speed, Google looks at two data points:

1. Page speed: How long it takes for a page to load

2. Site speed: The average time it takes for a sample of pageviews to load

When auditing your site, you only need to focus on page speed. Improve page load time and you’ll improve site speed. Google helps you do this with its PageSpeed Insights analyzer.

Enter a URL and PageSpeed Insights will grade it from 0 to 100. The score is based on real-world field data gathered from Google Chrome browser users and lab data. It will also suggest opportunities to improve.
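PageSpeed Insights also has an API, which is handy if you want to score many pages. The sketch below only builds the request URL and reads the score out of a response. It assumes the v5 endpoint and a response where the performance score is a 0 to 1 value under `lighthouseResult`, so check the current API reference before relying on it. The sample response is a trimmed, made-up stand-in, and fetching is left out so the example runs offline.

```python
from urllib.parse import urlencode

# Assumed v5 endpoint; confirm against the current API documentation.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_request(page_url, strategy="mobile"):
    """Return the request URL for testing page_url on mobile or desktop."""
    query = urlencode({"url": page_url, "strategy": strategy})
    return f"{PSI_ENDPOINT}?{query}"


def performance_score(psi_response):
    """Convert the API's 0-1 performance score into the familiar 0-100 grade."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def grade(score):
    """Bucket a 0-100 score into the bands shown in the PageSpeed Insights report."""
    if score >= 90:
        return "good"
    if score >= 50:
        return "needs improvement"
    return "poor"


# Trimmed, hypothetical response body (already decoded from JSON).
SAMPLE_RESPONSE = {"lighthouseResult": {"categories": {"performance": {"score": 0.42}}}}

print(build_psi_request("https://yourdomain.com/toys"))
score = performance_score(SAMPLE_RESPONSE)
print(score, grade(score))
```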

Poorly optimized images, JavaScript, and CSS files, along with poor browser caching practices, tend to be the culprits of slow-loading pages. Fortunately, these are easy to improve:

- Reduce the size of images without impacting quality with Optimizilla or Squoosh. If you’re using WordPress, optimization plugins like Imagify Image Optimizer and TinyPNG do the same job.

- Reduce JavaScript and CSS files by pasting your code into Minify to remove whitespace and comments.

- If you’re using WordPress, use W3 Total Cache or WP Super Cache to create and serve a static version of your pages to searchers, rather than having the page dynamically generated every time a person clicks on it. If you’re not using WordPress, caching can be enabled manually in your site code.

Start by prioritizing your most important pages. By going to Behavior > Site Speed in your Google Analytics, you can see how specific pages perform across different browsers and countries:

Check this against your most viewed pages and work through your site from the top down.

How to find out if your website is mobile-friendly

Google has been moving sites to mobile-first indexing since 2018 and set March 2021 as the date for applying it to all websites. It means that the pages Google indexes will be based on the mobile version of your site. Therefore, the performance of your site on smaller screens will have the biggest impact on where your site appears in SERPs.

Google’s Mobile-Friendly Test tool is an easy way to check if your site is optimized for mobile devices:

If you use a responsive or mobile-first design, you should have nothing to worry about. Both are developed to render on smaller screens and any changes you make as a result of your technical SEO audit will improve site and search performance across all devices.

However, a responsive design doesn’t guarantee a great user experience, as Shanelle Mullin demonstrates in her article on why responsive design is not mobile optimization.

You can test your site on real devices using BrowserStack’s responsive tool:

Standalone mobile sites should pass the Google test, too. Note that separate sites for mobile and desktop will require you to audit both versions.

Another option for improving site speed on mobile is Accelerated Mobile Pages (AMP). AMP is a Google-backed project designed to serve users stripped-down versions of web pages so that they load faster than standard HTML pages.

Google has tutorials and guidelines for creating AMP pages using code or a CMS plugin. However, it’s important to be aware of how these will affect your site.

Every AMP page you create is a new page that exists alongside the original. Therefore, you’ll need to consider how they fit into your URL scheme. Google recommends using the following URL structure:

http://www.example.com/myarticle/amp

http://www.example.com/myarticle.amp.html

You’ll also need to ensure that canonical tags are used to identify the master page. This can be the AMP page, but the original page is preferred. This is because AMP pages serve a basic version of your webpage that doesn’t allow you to earn ad revenue or access the same deep level of analytics.

AMP pages will need to be audited in the same way as HTML pages. If you’re a paid subscriber, Screaming Frog has features to help you find and fix AMP issues. You can do this in the free version, but you’ll need to upload your list of pages.

4. Find and fix duplicate content and keyword cannibalization issues to fine-tune SEO

By this stage, your content audit has already begun. Adding canonical tags ensures master pages are being given SEO value over similar pages. Flattened site architecture makes your most important content easy to access. What we’re looking to do now is fine-tune.

Review your site for duplicate content

Pages that contain identical information aren’t always bad. The toy police car pages we used as an example earlier, for instance, are necessary for serving users relevant results.

They become an issue when you have an identical page to the one you’re trying to rank for. In such cases, you’re making pages compete against each other for ranking and clicks, thus diluting their potential.

As well as product pages, duplicate content issues can occur for several reasons:

- Reusing headers, page titles, and meta descriptions, which makes pages appear identical even if the body content isn’t

- Not deleting or redirecting identical pages used for historical or testing purposes

- Not adding canonical tags to a single page with multiple URLs

A site crawl will help identify duplicate pages. Check content for duplication of:

- Titles

- Header tags

- Meta descriptions

- Body content

You can then either remove these pages or rewrite the duplicated elements to make them unique.
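If your crawler can export titles, headers, and descriptions, grouping pages by those values makes the duplicates obvious. The sketch below assumes a simple dictionary of page data. The field names (`title`, `h1`, `meta_description`) and the sample pages are hypothetical, so adapt them to whatever your export contains.

```python
from collections import defaultdict


def find_duplicates(pages, fields=("title", "h1", "meta_description")):
    """Return {field: {value: [urls]}} for values shared by two or more URLs."""
    duplicates = {}
    for field in fields:
        by_value = defaultdict(list)
        for url, data in pages.items():
            # Normalize case and whitespace so near-identical values match.
            value = (data.get(field) or "").strip().lower()
            if value:
                by_value[value].append(url)
        shared = {value: urls for value, urls in by_value.items() if len(urls) > 1}
        if shared:
            duplicates[field] = shared
    return duplicates


# Hypothetical crawl export: URL -> on-page elements.
PAGES = {
    "/toys/cars/blue-police-car": {
        "title": "Blue Toy Police Car",
        "meta_description": "A blue toy police car.",
    },
    "/toys/police-cars/blue-car": {
        "title": "Blue toy police car ",
        "meta_description": "Police car toy in blue.",
    },
    "/toys": {"title": "Toys", "meta_description": "All toys."},
}

for field, groups in find_duplicates(PAGES).items():
    for value, urls in groups.items():
        print(f"Duplicate {field} '{value}': {', '.join(urls)}")
```

This only catches exact matches after normalization. Body content that is merely similar needs a human read or a dedicated similarity check.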

Merge content that cannibalizes similar keywords

Keyword cannibalization is like duplicate content in that it forces search engines to choose between similar content.

It occurs when you have various content on your site that ranks for the same query, either because the topic is similar or because you’ve targeted the same keywords.

For example, say you wrote two posts. One on “How to write a resume” optimized for the phrase “how to write a resume” and the other on “Resume writing tips” optimized for “resume writing.”

The posts are similar enough for search engines to have a hard time figuring out which is most important.

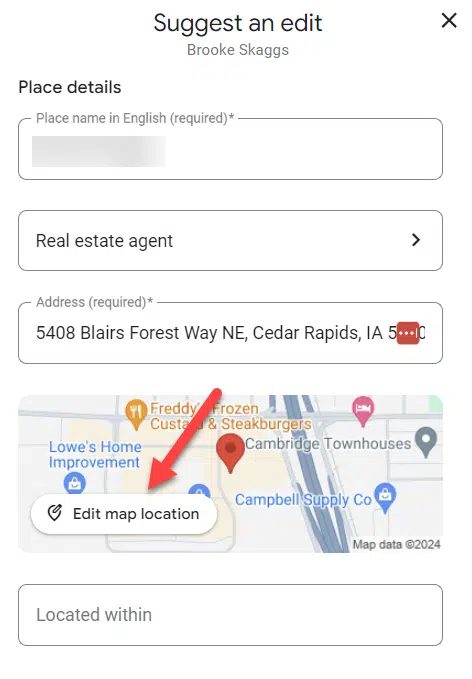

Googling “site:yourdomain.com ‘keyword’” will help you easily find out if keyword cannibalization is a problem.

If your posts are ranking #1 and #2, it’s not a problem. But if your content is ranking further down the SERPs, or an older post is ranking above an updated one, it’s probably worth merging them:

- Go to the Performance section of your Google Search Console.

- From the filters click New > Query and enter the cannibalized keyword.

- Under the Pages tab, you’ll be able to see which page is receiving the most traffic for the keyword. This is the page that all others can be merged into.

For example, “How to write a resume” could be expanded to include resume writing tips and become a definitive guide to resume writing.
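The same check can be run in bulk on exported performance data. The sketch below assumes rows of (query, page, clicks), which is a hypothetical simplification of a Search Console export. For each query that brings traffic to more than one page, it picks the page with the most clicks as the merge target.

```python
from collections import defaultdict


def cannibalized_queries(rows):
    """Return {query: (merge_target, other_pages)} for queries with 2+ pages.

    rows is an iterable of (query, page, clicks) tuples.
    """
    clicks_by_query = defaultdict(dict)
    for query, page, clicks in rows:
        pages = clicks_by_query[query]
        pages[page] = pages.get(page, 0) + clicks

    result = {}
    for query, pages in clicks_by_query.items():
        if len(pages) < 2:
            continue  # only one page gets traffic for this query, nothing to merge
        ranked = sorted(pages, key=pages.get, reverse=True)
        result[query] = (ranked[0], ranked[1:])
    return result


# Hypothetical export rows: (query, page, clicks).
ROWS = [
    ("resume writing", "/how-to-write-a-resume", 120),
    ("resume writing", "/resume-writing-tips", 45),
    ("cover letter", "/cover-letter-guide", 80),
]

for query, (target, others) in cannibalized_queries(ROWS).items():
    print(f"'{query}': merge {', '.join(others)} into {target}")
```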

It won’t work for every page. In some instances, you may want to consider deleting content that’s no longer relevant. But where keywords are similar, combining content will help to strengthen your search ranking.

Improve title tags and meta descriptions to boost your click-through rate (CTR) in SERPs

While title tags carry only modest ranking weight and meta descriptions aren’t a direct ranking factor, there’s no denying they make a difference to your curb appeal. They’re essentially a way to advertise your content.

Performing a technical SEO audit is the ideal time to optimize old titles and descriptions, and fill in any gaps to improve CTR in SERPs.

Titles and descriptions should be natural, relevant, concise, and make use of your target keywords. Here’s an example from the search result for Copyhackers’ guide to copywriting formulas:

The meta description tells readers they’ll learn why copywriting formulas are useful and how they can be applied in the real world.

While the title is also strong from an SEO perspective, it’s been truncated. This is likely because it exceeds Google’s 600-pixel limit. Keep this limit in mind when writing titles.

Include keywords close to the start of titles and try to keep characters to around 60. Moz research suggests you can expect ~90% of your titles to display properly if they are below this limit.

Similarly, meta descriptions should be roughly 155-160 characters to avoid truncation.
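These limits are simple to check in bulk. The sketch below flags titles over 60 characters and descriptions over 160. Bear in mind that character counts are only a proxy, since Google truncates by pixel width, so treat the warnings as prompts to review rather than hard failures. The function name and sample inputs are illustrative.

```python
TITLE_MAX = 60          # approximate character limit for titles
DESCRIPTION_MAX = 160   # approximate character limit for meta descriptions


def check_snippet(title, description):
    """Return a list of warnings for a page's title and meta description."""
    warnings = []
    if not title.strip():
        warnings.append("title is missing")
    elif len(title) > TITLE_MAX:
        warnings.append(f"title is {len(title)} characters (over {TITLE_MAX})")
    if not description.strip():
        warnings.append("meta description is missing")
    elif len(description) > DESCRIPTION_MAX:
        warnings.append(
            f"meta description is {len(description)} characters (over {DESCRIPTION_MAX})"
        )
    return warnings


# Hypothetical page data.
long_title = "How to Write a Resume: The Complete Step-by-Step Guide for Every Industry"
for warning in check_snippet(long_title, ""):
    print(warning)
```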

It’s worth noting that Google won’t always use your meta description. Depending on the search query, they may pull a description from your site to use as a snippet. That’s out of your control. But if your target keywords are present in your meta tags, you’ll give yourself an edge over other results that go after similar terms.

Conclusion

Performing a technical SEO audit will help you analyze technical elements of your website and improve areas that are hampering search performance and user experience.

But following the steps in this article will only resolve any problems you have now. As your business grows and your website evolves, and as Google’s algorithms change, new issues with links, site speed, and content will arise.

Therefore, technical audits should be part of an ongoing strategy, alongside on-page and off-page SEO efforts. Audit your website periodically, or whenever you make structural or design updates to your website.